Yet another org-mode parser

I've been meaning to write a blog for a long time now, but when it comes to actually writing something I just keep putting it off over and over again. Interestingly, this post is, in a way, about just that. Once again I've procrastinated instead of writing any posts, this time by building an org-mode parser in go. Because that's totally necessary to start writing, duh!

You can find the results on github. There are even some examples on github pages.

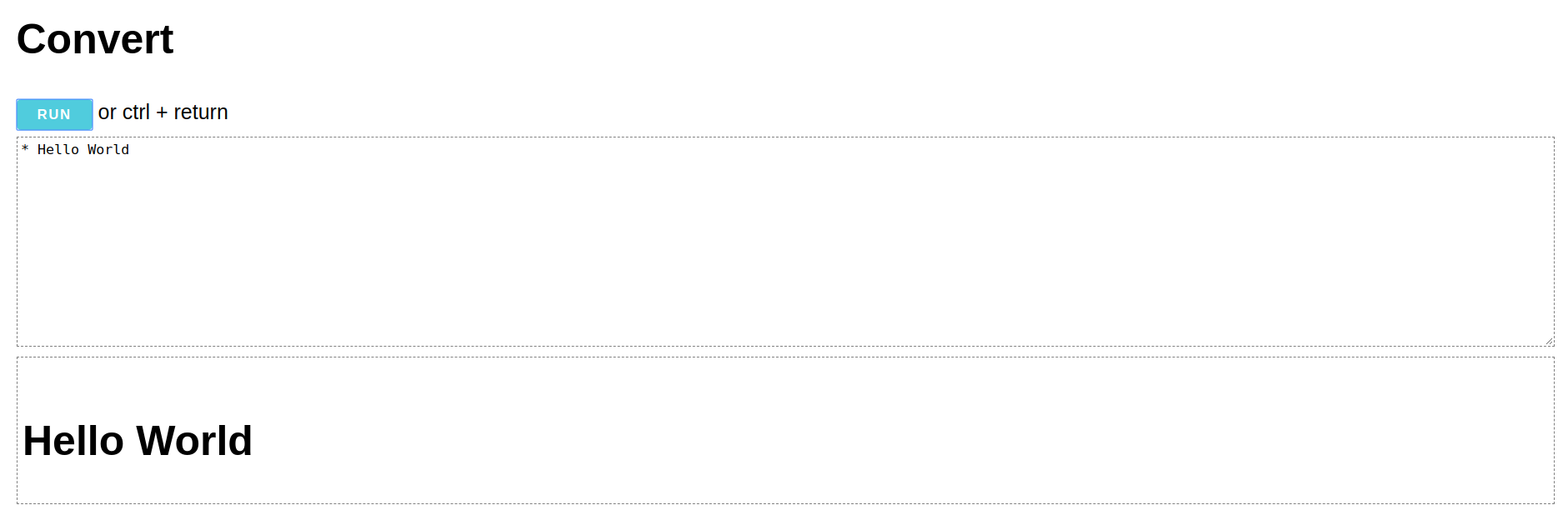

And here it is running in your browser[1], because go 1.11 supports compiling to wasm. How awesome is that? I'm really starting to dig go.

just why…

I love org-mode. It's the best thing since sliced bread. Sadly, blogging with org-mode is harder than it should be. I don't know why, but everything out there that supports org feels overcomplicated or not powerful enough. And I don't even want that much:

- a dev server with live reload

- reasonable org-mode support (e.g. nested lists render correctly)

- tags & tag index pages

There are some solutions, but nothing clicked for me. And then there's hugo. Hugo checks all of my requirements, and it's written in go, which I'm currently trying to learn! So hugo would be perfect, if only its org-mode support were any good. But alas, goorgeous, the library hugo uses for org-mode, has been abandoned and doesn't look like something I would want to maintain.

Luckily, it's not that hard to replace goorgeous in hugo, and this blog post is rendered using the result of that. Here's the PR. For now I'm just using `go run -tags 'extended' ~/go/src/github.com/gohugoio/hugo serve` for my blog with the changeset applied locally.

what's next

I'm still (very) actively working on it and adding new features as I find a use for them. The plan is to polish things a little more and then add it to hugo proper. But I'm afraid that won't be a backwards-compatible change without quite a lot of work, so I'm not sure that will ever happen.

Let's see if I'll write more stuff now. At least I had a lot of fun learning about parsing and go and got this post out of it.

how

Parsing is done in 3 steps:

- lex input lines into tokens

  Parsing the document requires looking at the surrounding context quite a lot (e.g. while inside a drawer we need to check whether the next line is a headline, because headlines can't be inside drawers). Doing this rough categorization of lines only once, in a separate lexing step, is really helpful. We also need to know the indentation of each line, as lists are based on indentation, and having that information available as a field on each token is super handy. This step is mostly regexp based: match groups give easy access to the relevant parts of the line, and because the lexing and parsing code live in the same file it's easy enough to keep track of which index into the match corresponds to what. Each token contains a field with the complete match.

- parse tokens into nodes

  We loop over the list of tokens and pass along a stop function that tells us when the parent element is done and we should stop parsing. The tokens are a list rather than a chan because we need lookback and lookahead; adding that on top of a channel would add complexity for little benefit, considering Org mode files will practically never be big enough to require a streaming architecture. We can't pull the stop check out into the shared loop because some elements don't care whether their parent considers itself done (e.g. list items containing blocks with unindented lines, or list items with paragraphs: the unindented / empty lines normally end the list item, but there we don't want that).

- parse inline markup of certain nodes

  Some nodes, like paragraphs and certain kinds of blocks, contain inline markup like code and bold and stuff. As that markup can span multiple lines, we can only parse it once we have the complete text content of the node.
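To make the first two steps more concrete, here is a minimal sketch in go. It is not go-org's actual code: the token kinds, the four simplified line patterns and the names `token`, `node`, `parser`, `lex`, `parseMany` and `parseOne` are all hypothetical, and real org syntax needs many more patterns. Lines are lexed once into tokens that carry their indentation and full regexp match, and the parser walks the token slice, handing each child a stop function that can look ahead.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// token is one lexed line: its rough kind, its indentation and the full
// regexp match (index 0 is the whole line, later indexes are match groups).
type token struct {
	kind    string
	indent  int
	matches []string
}

// Hypothetical, heavily simplified line patterns, tried in order.
var lexers = []struct {
	kind string
	re   *regexp.Regexp
}{
	{"headline", regexp.MustCompile(`^(\*+)\s+(.*)`)},
	{"listItem", regexp.MustCompile(`^(\s*)([-+*]|\d+[.)])\s+(.*)`)},
	{"empty", regexp.MustCompile(`^\s*$`)},
	{"text", regexp.MustCompile(`^(\s*)(.*)`)},
}

// lex categorizes every line exactly once.
func lex(input string) []token {
	var tokens []token
	for _, line := range strings.Split(input, "\n") {
		indent := len(line) - len(strings.TrimLeft(line, " \t"))
		for _, l := range lexers {
			if m := l.re.FindStringSubmatch(line); m != nil {
				tokens = append(tokens, token{l.kind, indent, m})
				break
			}
		}
	}
	return tokens
}

type node struct {
	kind     string
	text     string
	children []node
}

// stopFn reports whether the element being parsed should end before token j.
type stopFn func(p *parser, j int) bool

// parser keeps all tokens in a slice so stop functions can look ahead freely.
type parser struct{ tokens []token }

// parseMany parses sibling nodes from token i until stop is true or the
// input ends, returning the nodes and the number of tokens consumed.
func (p *parser) parseMany(i int, stop stopFn) ([]node, int) {
	start := i
	var nodes []node
	for i < len(p.tokens) && !stop(p, i) {
		n, consumed := p.parseOne(i, stop)
		nodes = append(nodes, n)
		i += consumed // always >= 1, so the loop makes progress
	}
	return nodes, i - start
}

func (p *parser) parseOne(i int, stop stopFn) (node, int) {
	t := p.tokens[i]
	switch t.kind {
	case "headline":
		level := len(t.matches[1])
		// A section runs until the next headline of the same or a higher
		// level; it relies on levels alone and ignores the parent's stop.
		children, n := p.parseMany(i+1, func(p *parser, j int) bool {
			u := p.tokens[j]
			return u.kind == "headline" && len(u.matches[1]) <= level
		})
		return node{"headline", t.matches[2], children}, 1 + n
	case "listItem":
		// A list item owns the following lines indented deeper than its
		// bullet, and also stops whenever its parent wants to stop.
		children, n := p.parseMany(i+1, func(p *parser, j int) bool {
			u := p.tokens[j]
			return stop(p, j) || u.kind == "empty" || u.indent <= t.indent
		})
		return node{"listItem", t.matches[3], children}, 1 + n
	case "empty":
		return node{kind: "empty"}, 1
	default:
		// A paragraph joins consecutive text lines until the parent stops.
		lines := []string{t.matches[2]}
		j := i + 1
		for j < len(p.tokens) && p.tokens[j].kind == "text" && !stop(p, j) {
			lines = append(lines, p.tokens[j].matches[2])
			j++
		}
		return node{"paragraph", strings.Join(lines, " "), nil}, j - i
	}
}

func main() {
	p := &parser{tokens: lex("* Intro\nSome text\nspanning lines\n- one\n  nested text\n- two\n* Next")}
	doc, _ := p.parseMany(0, func(*parser, int) bool { return false })
	fmt.Printf("%+v\n", doc)
}
```

The list item's stop function calls its parent's `stop` and adds its own conditions, while the headline ignores it. An element that must keep going even though its parent would stop, like the blocks mentioned above, would likewise just leave that call out.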

We also create an outline (tree of headlines / sections) and a map of footnotes and stuff like that during parsing. That's not strictly necessary; we could just build those from the resulting AST afterwards. It's just nicer this way.
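Building the outline during parsing can be as simple as remembering the most recently added section and walking up from it until a section with a lower level turns up. A hypothetical sketch (the `section` and `outline` types and the `add` method are made up for illustration, and footnotes are left out):

```go
package main

import "fmt"

// section is one outline entry: a headline plus its subsections.
type section struct {
	level    int
	title    string
	parent   *section
	children []*section
}

// outline tracks the tree and the most recently added section while the
// parser walks the document from top to bottom.
type outline struct {
	root    *section // level-0 pseudo-section standing for the whole document
	current *section
}

func newOutline() *outline {
	root := &section{level: 0}
	return &outline{root: root, current: root}
}

// add attaches a headline (level >= 1) to the closest preceding section with
// a lower level, so a level-2 headline after a level-1 one becomes its child.
func (o *outline) add(level int, title string) *section {
	parent := o.current
	for parent.level >= level {
		parent = parent.parent
	}
	s := &section{level: level, title: title, parent: parent}
	parent.children = append(parent.children, s)
	o.current = s
	return s
}

// printSections prints the outline with two spaces of indentation per level.
func printSections(s *section, depth int) {
	for _, c := range s.children {
		fmt.Printf("%*s%s\n", depth*2, "", c.title)
		printSections(c, depth+1)
	}
}

func main() {
	o := newOutline()
	o.add(1, "just why")
	o.add(1, "how")
	o.add(2, "lexing")
	o.add(2, "parsing")
	o.add(1, "what's next")
	printSections(o.root, 0)
}
```

Because the root has level 0 and every headline has level 1 or more, the upward walk always terminates at the root at the latest.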

Once we have our AST we can convert it into different formats using the Writer interface. I decided to keep the formatting separate from the node interface (i.e. not add Org() and HTML() methods to each node) to make it easier and more consistent to add new export formats outside of go-org.
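A minimal sketch of that separation, assuming a simplified `Writer` interface with a single `WriteNode` method; go-org's actual interface may look different, and every type and method name here is hypothetical. The nodes are plain data, and each export format lives in its own type that switches on the node type:

```go
package main

import (
	"fmt"
	"html"
	"strings"
)

// Node is a hypothetical AST node; the concrete types carry data only.
type Node interface{}

type Headline struct {
	Level int
	Title string
}

type Paragraph struct{ Text string }

// Writer is a hypothetical export interface implemented outside the node
// types, so adding a format never requires touching the nodes themselves.
type Writer interface {
	WriteNode(n Node)
	String() string
}

type HTMLWriter struct{ sb strings.Builder }

func (w *HTMLWriter) WriteNode(n Node) {
	switch n := n.(type) {
	case Headline:
		fmt.Fprintf(&w.sb, "<h%d>%s</h%d>\n", n.Level, html.EscapeString(n.Title), n.Level)
	case Paragraph:
		fmt.Fprintf(&w.sb, "<p>%s</p>\n", html.EscapeString(n.Text))
	}
}

func (w *HTMLWriter) String() string { return w.sb.String() }

type OrgWriter struct{ sb strings.Builder }

func (w *OrgWriter) WriteNode(n Node) {
	switch n := n.(type) {
	case Headline:
		fmt.Fprintf(&w.sb, "%s %s\n", strings.Repeat("*", n.Level), n.Title)
	case Paragraph:
		fmt.Fprintf(&w.sb, "%s\n", n.Text)
	}
}

func (w *OrgWriter) String() string { return w.sb.String() }

// Write renders a list of nodes with any Writer.
func Write(w Writer, nodes []Node) string {
	for _, n := range nodes {
		w.WriteNode(n)
	}
	return w.String()
}

func main() {
	doc := []Node{Headline{1, "how"}, Paragraph{"Parsing is done in 3 steps"}}
	fmt.Print(Write(&HTMLWriter{}, doc))
	fmt.Print(Write(&OrgWriter{}, doc))
}
```

Adding, say, a Markdown export would mean adding one more type that implements `Writer`, possibly in a different package, while the node types stay untouched.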